Google’s Amazing New Satellite Imagery Will Help Researchers, Too

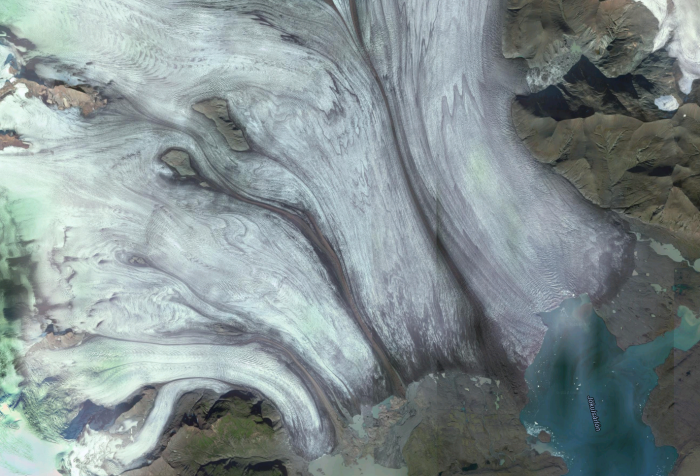

Google Earth has improved a lot over the last decade, and it just got its biggest shot in the arm yet: more than 700 trillion individual pixels with which to render the surface of our planet in exquisite detail.

Not that you can see all those pixels. The data set, acquired by Landsat 8, a satellite operated by NASA and the United States Geological Survey, is so huge because it captures the same view of the planet many times over. That redundancy allows Google to pick and choose from the images, creating a mosaic of Earth that’s cloud-free, with “greater detail [and] truer colors” than before.
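To make the pick-and-choose idea concrete, here is a minimal sketch of one common way to build a cloud-free mosaic from repeated views: a per-pixel median over the clear observations. This is an illustration only, not Google's actual pipeline, which the source does not describe. The function name `cloud_free_composite`, the tiny 2×2 scenes, and the convention of marking cloudy pixels with `None` are all assumptions made for the example.

```python
from statistics import median
from typing import List, Optional, Sequence

# Hypothetical convention: None marks a pixel that was flagged as cloudy.
Pixel = Optional[float]
Scene = Sequence[Sequence[Pixel]]


def cloud_free_composite(scenes: Sequence[Scene]) -> List[List[Pixel]]:
    """Combine repeated views of the same area into a single mosaic.

    For each pixel position, take the median of the clear observations.
    A pixel stays None only if every scene was cloudy at that position.
    """
    if not scenes:
        raise ValueError("need at least one scene")
    rows, cols = len(scenes[0]), len(scenes[0][0])
    mosaic: List[List[Pixel]] = []
    for r in range(rows):
        out_row: List[Pixel] = []
        for c in range(cols):
            # Keep only the observations that were not flagged as cloudy.
            clear = [s[r][c] for s in scenes if s[r][c] is not None]
            out_row.append(median(clear) if clear else None)
        mosaic.append(out_row)
    return mosaic


# Three made-up passes over the same 2x2 patch of ground.
scenes = [
    [[20, None], [50, 90]],
    [[40, 70], [None, 10]],
    [[30, 60], [None, None]],
]
mosaic = cloud_free_composite(scenes)
print(mosaic)
```

The more passes there are over a given spot, the more likely at least one of them is clear, which is why the redundancy in the data matters.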

It’s not the first time Google has produced a clear view of the planet. It did so three years ago with data from Landsat 7. But because of a hardware fault, that satellite provided incomplete data, which meant that the images had to be processed more heavily and then stitched together, leaving the strong diagonal lines you’ll have seen in Google’s satellite imagery over the last three years.

All this processing, including the stitching together of the images from Landsat 8, takes place in Google’s publicly available Earth Engine. While Google Earth is nice for most folks to casually browse, Earth Engine is where the real action is. Unlike Google Maps, it provides all kinds of information, allowing users to dive into the data: there are radar views from ESA’s Sentinel-1 satellite; environmental data about land and crop cover; climate records about the atmosphere and surface temperature; and even human data about population and malaria prevalence.

Researchers have used Earth Engine to build some impressive applications. Beth Tellman of Arizona State University, for instance, has developed Cloud to Street, a tool that maps flood vulnerability. A team from UC San Francisco built a tool that shows where malaria is likely to be transmitted, based on data such as rainfall and vegetation coverage. And the Sage Grouse Initiative is using Earth Engine to create conservation indicators it can use to ensure that ranching across western America is sustainable.

The new imagery, then, will only serve to make the analysis carried out by researchers more accurate than ever. Though you can still check out where you’re going on holiday if you want to—we won’t tell.

(Read more: Google, The Atlantic)
